Drawing on co-presented work with Gwendolyn Dooley, EdD, May 2026.

Before I became a faculty member, I spent years leading teams inside knowledge-service organizations — automotive, powersports, financial services — where the difference between a capable employee and an indispensable one was rarely technical. It was almost always something harder to name and harder to develop. The ability to read a situation that did not fit a known pattern. The willingness to make a call when the data was incomplete. The confidence to push back on a recommendation that looked right but felt wrong. The capacity to stand behind a decision and explain it clearly to someone who disagreed.

Those things did not show up on a resume. They showed up, or failed to show up, when the moment required them.

I am now a doctoral candidate formally studying that gap, examining how knowledge-service organizations understand and develop workforce readiness for an AI-shaped environment. The question is not one I arrived at through a literature review. I arrived at it through years of observation — first as a practitioner, then as a faculty member, and increasingly as someone who occupies both spaces at once and cannot stop noticing what passes between them.

What I keep returning to is this: the tools now allow the assignment to be finished without the thinking that the assignment was designed to build. Which raises a question worth sitting with — are students completing credentials without fully developing the capacities those credentials are supposed to represent? And if so, is that an AI problem, or a design problem that AI has simply made impossible to ignore?

I think it is the latter. And I think it belongs to all of us.

What the Absence Looks Like

I have read the paper that stops you cold at eleven o’clock at night. It is organized. The argument holds together. The citations are formatted correctly. And something is missing that you cannot immediately name, but have been teaching long enough to recognize.

What is missing is the student.

The wrestling with a sentence that would not cooperate. The paragraph that got cut because it didn’t fit, yet is still visible in the transition. The small moment of clarity in the conclusion that only arrives after the earlier confusion has been worked through. Those things are not stylistic. They are evidence of cognitive work, and they are how you know that the person who submitted the assignment was also the person who did the thinking.

When the AI completes the assignment, those traces are gone. The product is cleaner. The process is absent. The grade may be earned. The learning was not.

This pattern is not limited to undergraduate writing courses. The graduate student who generates a thematic synthesis without interrogating the literature has not learned to read critically. The doctoral candidate who produces a technically correct interpretation of their data without sitting with the ambiguity long enough to develop genuine scholarly insight has not done research. At every level, the form can be present while the thinking remains somewhere the student never went.

The consequences do not stay in the classroom. The undergraduate who cannot construct an argument under pressure. The professional who cannot exercise judgment in an unfamiliar situation. The leader who cannot explain their reasoning to a room full of people who are not convinced. These are not hypothetical outcomes. They are patterns I observed across years of leading teams, watching what happens when academic preparation meets professional expectation.

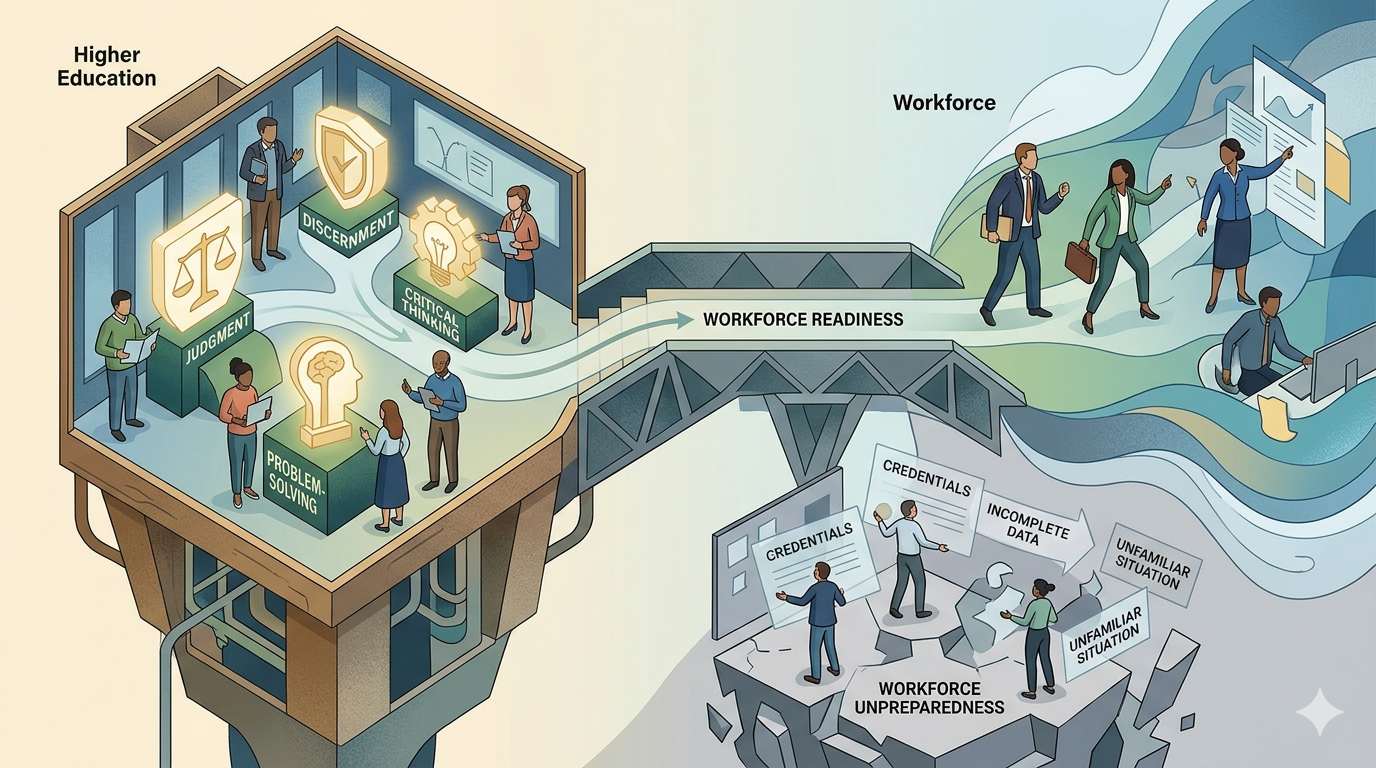

The Four Things Higher Education Must Still Develop

Four capacities appear most consistently in the gap between what higher education aims to develop and what the workplace reveals to be missing. They are worth naming plainly.

Judgment is the ability to weigh what you know against what you do not know and make a decision you can defend. It is built through practice, and specifically through the experience of being wrong, recognizing why, and adjusting. It cannot be outsourced to a system that does not carry the weight of consequences.

Discernment is the ability to distinguish what is credible from what merely sounds credible, what is relevant from what is merely available, and what represents original thinking from what is a confident recombination of things that already existed. At its most dangerous, AI output undermines discernment invisibly. It sounds authoritative. It cites plausibly. It reasons fluently. Right up until it does not.

Critical thinking is the ability to examine an argument, including your own, for the quality of its structure, its assumptions, and its evidence. It is what makes it possible for a student to say “this conclusion does not follow” rather than accepting what is in front of them because it arrived with confidence. That capacity is not a subject. It is a practice, and it develops only through repetition.

Problem-solving, in the sense that matters here, is not executing a known method. It is working through something genuinely uncertain — trying an approach, evaluating what happens, revising, and persisting — without a prompt telling you what to do next and without a template defining what done looks like.

Higher education has always been the place where these capacities are supposed to develop. Not incidentally. Intentionally. They are the reason the credential is supposed to carry weight with an employer, a graduate program, or a community. What happens when students move through degree programs without fully developing them? Does the credential outlast the preparation it was meant to represent? That is not a new question. But AI has made it more urgent, more visible, and harder to defer.

What Faculty Still Own

I want to be precise here, because this conversation goes sideways when it becomes about banning tools rather than protecting outcomes.

The question is not whether students should use AI. Many of them will. Many of them already do, for everything from drafting to research to feedback. The question is what faculty must insist upon, regardless of the tools involved: evidence that the student was present in the thinking, not just in the submission.

In work I co-presented with Dr. Gwendolyn Dooley on AI as a research assistant, we framed faculty AI use around four questions: verify, align, protect, and personalize. The same framework applies to what we ask of students. Did they verify the output against what they actually know? Did they align it to the specific context of the assignment, the course, and their argument? Did they protect the integrity of the work by being accountable for what they submitted? Did they personalize it — leave traces of themselves in the thinking rather than removing them?

An assignment that requires a real conversation cannot be completed by an AI that has not had one. An analysis that demands a student defend their reasoning to a skeptical reader cannot be passed off by a student who does not understand what they submitted. A reflection that asks where the student changed their mind this semester is genuinely hard to fabricate for someone who was not there.

Design toward the human. That is not about making AI impossible. It is about making the thinking visible.

The Honest Conversation About Who This Applies To

I hold two roles simultaneously, faculty member and doctoral candidate, and I want to acknowledge that directly.

As a faculty member, I am asking students to develop capacities that I am still developing myself. My doctoral work is, among other things, an extended exercise in sitting with ambiguity, exercising judgment when the evidence is incomplete, and building an argument I can defend under questioning. I know what it feels like to want a shortcut. I also know what it costs.

As a doctoral candidate formally studying AI and workforce readiness, I am in a somewhat unusual position: pursuing questions I have already been living with for years. What I observed in organizations as a practitioner, and what I observe in classrooms as a faculty member, keeps pointing to the same place: the people who navigate AI-saturated environments most effectively are not primarily the ones who have learned to prompt well. They are the ones who know when the output is wrong, when the recommendation should be questioned, and when the situation requires something the tool cannot supply.

That knowing is not technical. It is judgment. It is discernment. It is everything that has to be built before it can be applied. And it is built, or not built, in the space that higher education either protects or surrenders.

The urgency of this moment in higher education is real. Shifts in AI capability are accelerating faster than institutions can revise their policies, faster than faculty can redesign their assignments, faster than students can be taught to navigate what is now in front of them. This is not a solitary observation. It has been named within the technology industry (Shumer, 2026) and, for doctoral education specifically, by Markette and Markette (2026). The classroom consequences, however, are for faculty to address. Those who adapt thoughtfully will be positioned to lead. Those who wait for the environment to stabilize before responding may find it has changed beyond the point where waiting still makes sense.

The cheese, to borrow from a useful if familiar parable, has already moved (Johnson, 1998). The question worth spending energy on is not where it went. It is what we choose to protect as we move through the maze to find it.

What we need to protect is not difficult to name. It is the capacity to think for yourself, to evaluate what is in front of you, to stand behind your reasoning, and to remain accountable for the work. Those are not soft skills. They are the foundational competencies that allow everything else to function. And they can only be developed in the space between not knowing and knowing — the space that, when AI is misused, simply closes without teaching anyone anything.

Faculty who design toward that space, who require evidence of thinking rather than evidence of completion, who model judgment rather than outsourcing it, are not fighting AI. They are doing exactly what they were hired to do. Teaching. In the deepest sense of the word.

That has not changed. It will not change. And it may be the most important thing we do right now.

The Faculty AI Framework: Four Questions Before Every AI-Assisted Interaction

Verify. Have you checked this output for accuracy, invented citations, outdated information, and alignment with the assignment before it reaches a student? AI generates confidently even when it is wrong. Catching what the algorithm missed is a non-negotiable first step.

Align. Does this response connect to the rubric, the course learning outcomes, and where this specific student is in their development? AI does not know your student. You do. That knowledge must shape every interaction.

Protect. Have you removed all identifiable student information before engaging any AI tool? Names, institutional details, personal disclosures, and draft content belong to the student and are not to be shared with any third-party system.

Personalize. Does this sound like a faculty member who knows this student, or like a system that processed their submission? The teaching voice, specific and human and accountable to the relationship, is what no algorithm can supply and what every student deserves.

AI assists. Faculty decides.

References

Johnson, S. (1998). Who moved my cheese? An amazing way to deal with change in your work and in your life. G.P. Putnam’s Sons. https://ia800305.us.archive.org/17/items/WhoMovedMyCheese_201604/Who%20Moved%20My%20Cheese.pdf

Markette, N., & Markette, J. (2026, February). Why “Something Big Is Happening” means your doctorate needs to change — now [Video]. YouTube. https://www.youtube.com/watch?v=NRcCMCaUids

Shumer, M. (2026, February 11). Something big is happening: AI’s February 2020 moment. Fortune. https://fortune.com/2026/02/11/something-big-is-happening-ai-february-2020-moment-matt-shumer/

Lynn F. Austin is a doctoral candidate researching Strategic Workforce Readiness for Artificial Intelligence in Knowledge-Service Organizations and an award-winning faculty member with leadership experience at Fortune 100 companies in automotive, powersports, and financial services. Her service focuses on AI-integrated pedagogy, inclusive course design, and faculty development. She is a contributing author to Teach Smarter, Not Harder: Shaping Education in the Digital Age, forthcoming.